Abstract

This paper investigates the impact of lethal autonomous weapon systems on the ethical aspects of warfare. Its central claim is that moral deskilling is a tangible threat. Autonomous weapons are likely to induce a slacking-off among military personnel, which will erode military ethics over time, since many military norms are virtues normally acquired through repeated on-the-job practice. What is at issue is not a one-off violation of humanitarian law but the survival of military virtues in a hollowed-out form after such moral deskilling.

Humanity is still struggling to fully comprehend the economic, political, environmental, cultural, and moral ramifications of the significant changes that modern technologies have brought about in human practices and institutions around the world (Heuser et al., 2025; Vallor, 2014b). One of these changing practices is in the military realm. Military pundits, as well as the security establishments of a considerable number of powerful states, are strongly calling for greater automation (Alwardt & Schörnig, 2021, p. 298). To cope with the changing ethical conditions triggered by the development and widening use of artificial intelligence (AI) in almost every sector of society, numerous ethics frameworks, studies, principles, and guidelines have been proposed (Boshuijzen-van Burken et al., 2024).

One of the most important debates of the last decade has concerned lethal autonomous weapon systems (LAWS) (Santoni de Sio & van den Hoven, 2018; Vallor, 2014a). LAWS are seen as the third revolution in military technology, following gunpowder and nuclear weapons. The issue is high on the political and military agendas of many states, including but not limited to the USA, Russia, and China (Bode, 2024; Haner & Garcia, 2019; Herzfeld, 2022). Militaries around the world use technology primarily to accomplish strategic goals and, in times of conflict, as a vital means to inflict "as much damage on the enemy while trying to risk as few personnel and resources as possible" (Coeckelbergh, 2013; de Vries, 2023, p. 26). In the same vein, LAWS allow their operators to accelerate military decision-making cycles with unprecedented speed and accuracy (Arai & Matsumoto, 2023, p. 451; Emery, 2021; Frølich & Mansouri, 2021, p. 50; Herzfeld, 2022).

This paper addresses the significant ethical question of moral deskilling, which may be brought about by increasing dependence on LAWS in military deployments. Moral deskilling can be described as “the potential degeneration of human judgemental capacity due to AI dependency” (Kim, 2025) or as “removing human ethical know-how in areas of paramount ethical concern” (Hovd, 2023, p. 1). Deskilling has been widely observed in connection with the automation of production stages in various industrial and service sectors. The main research question of this paper is whether moral deskilling is relevant to the military, especially within the framework of human-LAWS interaction. To this end, two case studies will be examined, although only the second involves intensive deployment of LAWS or similar autonomous systems, especially decision support systems.

For the purposes of this inquiry, a LAWS is defined as a “weapons system that can independently select and attack targets” (Park, 2020, p. 397). In accordance with this definition, LAWS apply force following a machine analysis of the environment through sensors, with little or no human involvement (Bode, 2024). These systems are taking over military functions traditionally performed by human soldiers (Sati, 2023). Within the framework of this study, an ethical process is one in which individuals draw on their own moral base to determine whether a certain action or omission is right or wrong (Poszler & Lange, 2024). On this conceptualization, delegating decisions to kill to LAWS warrants ethical scrutiny of the moral ramifications that such delegation might entail.

This inquiry begins by shedding light on an immediate outcome of increasing autonomy in critical military functions, i.e. targeting and engaging the enemy. This outcome is the systematization of the killing of those who happen to be at the receiving end of an autonomous attack. Barring a total ban on LAWS, such systematization is unavoidable. This part of the paper draws on Kelman’s approach to “violence without moral restraint” (Kelman, 1973). Central terms in Kelman’s approach, such as routinization and dehumanization, will help us better grasp the risks posed by moral deskilling.

Some of the most catastrophic events in contemporary human history, such as colonial conflict, ethnic cleansing, and genocide, have involved the systematic use of violence (Renic & Schwarz, 2023). Killing via LAWS is always a systematic form of violence, and each component in the work-cycle of these systems is prone to technification (Renic & Schwarz, 2023). This proneness puts every humanitarian restraint in serious jeopardy (Emery, 2021; Renic & Schwarz, 2023). It is therefore justified to reiterate that favourable views of the reliability of autonomous weapons in complying with humanitarian rules and regulations are neither particularly strong nor convincing (Güneysu, 2024). Given these dangers, numerous actors have criticized LAWS, and some have even called for an outright ban on such systems (Wood, 2024).

H.C. Kelman’s approach to sanctioned massacres offers a useful key to understanding why technified killing by LAWS is not fundamentally different from the mass murders committed by the rationally organized Nazi bureaucracy. Kelman addresses the problem of sanctioned violence, especially against civilians and others who have neither the willingness nor the capacity to fight back. At some point, restraints against wanton destruction and violence lose their strength (Kelman, 1973). Kelman identifies three distinct factors that lead to this loss of restraint: authorization, routinization, and dehumanization. All three play a similar role in a LAWS-related setting, weakening restraints on violence.

Continuous deployment of LAWS may foster the perception that they constitute a new authority figure, one that is responsible and trustworthy (Johnson, 2023). LAWS obviously do not suffer from human weaknesses (de Vries, 2023, p. 27; Emery, 2021). They do not get tired or hungry, nor do they lose self-control after the loss of a comrade. In addition, LAWS have no urge for self-preservation. Especially in structured and isolated settings, LAWS fulfil their designated tasks faster (Alwardt & Schörnig, 2021, p. 301) and better than human operators. An AI-enabled shooting system would in all likelihood outperform human snipers. This success may well create over-trust among human soldiers, especially once they have a series of successful operations under their belts (Johnson, 2023). This emerging trust can lead to the acceptance of computers as a “heuristic replacement of vigilant information seeking and processing” (Goddard et al., 2012). From that point onward, LAWS will probably be perceived as an all-knowing soldier whose solutions should be applied without delay for the sake of military success. As Kelman (1973) stresses, albeit with regard to somewhat different hierarchical relations, “authorization obviates the necessity of making judgments or choices”. Applied to human-AI interaction, this suggests that human operators will follow AI-generated solutions as if they were orders from a seasoned veteran, in the belief that they have no other option. The emergence of LAWS as an authority figure will lead human personnel to regard the solutions and suggestions these systems generate as automatically justified (Kelman, 1973, p. 39).
Attached to this elevation of LAWS to the status of a quasi-omnipotent battlefield figure is a dangerous human tendency: “the individual does not see themselves as personally responsible” but feels obliged to follow LAWS-generated instructions (Kelman, 1973, p. 39).

Closely related to authorization is routinization. Kelman clearly underlines the importance of this development as observed in genocidal bureaucracies, among others. There is, moreover, an inherent resemblance between how bureaucracies and AI-powered militaries kill: a highly rationalized, well-planned and calmly implemented series of acts and transactions aimed at killing the enemy and weakening its power. LAWS kill on the basis of many years of research and development and millions of lines of code. In addition, the work-cycle of a LAWS is a mathematically expressed construct that, once again, stores its functions in ones and zeroes, thereby reducing dead civilians and enemy soldiers alike to mere numbers. Any military superior will find it not only extremely difficult but also seemingly redundant to audit these numbers and the LAWS decisions that produced them. This difficulty (if not impossibility), combined with unlimited faith in LAWS capabilities, “reduce[s] the necessity of making decisions”, in Kelman’s words, since decisions are left to a super-human comrade. Kelman warns about the transformation of “action into routine, mechanical, highly programmed operations” (Kelman, 1973, p. 46). In Kelman’s world, this roboticization affected human cadres. Today, however, real robots, algorithms and weapon systems are replacing humans. The entire system will now depend on mechanical, highly programmed operators, only this time made of steel and software. What remains for humans will be the vetting of military operations and the maintenance of autonomous war machines, now that activities requiring sophisticated emotional and cognitive skills, formerly exclusive to humans, are progressively being taken over by machines (Huseynova, 2024, p. 2).
This author finds it hard to believe that such a narrow list of responsibilities will encourage military bureaucrats to reflect, even occasionally, on the ethical implications of military actions. Such soul-searching is especially difficult when the military bureaucrat contributes to the war effort from a distance, and LAWS will only widen this distance between the soldier and the battlefield (Biggar, 2023; Johnson, 2023).

Lastly, dehumanization threatens all humanitarian restraints on violence. As already noted, the operation of LAWS will reduce dead or wounded enemy personnel and/or civilians to numbers. These individuals are stripped of their identities and names and inserted into a mathematical formula that determines whether the LAWS in question has filled its kill quota for the day. However, “to accord a person identity is to perceive him as an individual, independent and distinguishable from the others” (Kelman, 1973). By stripping individuals of their identities, and of their right at least to be remembered as unique persons distinct from others, LAWS therefore represent nothing but the latest version of dehumanization.

In light of the foregoing, Kelman’s account of violence without moral restraint offers an adequate way to address the ethical problems entrenched in the rampant pro-LAWS rhetoric. Alarmed by similar analyses, representatives of a plethora of states have sought to build a fail-safe into the functioning of LAWS. In formal and informal discussions on LAWS, a pivotal concept emerged to this end: so-called meaningful human control (MHC).

MHC is presented as a key solution to almost every problem brought about by the introduction of LAWS to the battlefield (Chapa, 2024; Emery, 2021). Is one worried about an emerging impunity gap due to the deployment of LAWS? Would constant use of LAWS eventually and unavoidably cause violations of humanitarian law? MHC has been presented both as a means of closing the impunity gap and as a fail-safe, since supervision by human operators is believed to prevent future violations of humanitarian law by LAWS. In the latest draft convention, an article provides that LAWS “may only be developed such that their effects in attacks are capable of being anticipated and controlled as required in the circumstances of their use, by the principles of distinction and proportionality” (Draft Articles on Autonomous Weapon Systems, 2024). Evidently, the like-minded states have granted human control a central position in the functioning of LAWS as the means of ensuring that the law of armed conflict is upheld. It is also obvious that “militaries have no interest in losing or no longer being able to execute situational control over their own units” (Alwardt & Schörnig, 2021, p. 301). Control implies that the human operator must have contextual awareness, active cognitive participation, and sufficient time for full deliberation about the target and the attack (Crootof, 2016a).

However, factors such as automation bias have shown how insufficient any MHC is likely to be (Coco, 2023; Goddard et al., 2012; Güneysu, 2024). This deficiency will only be aggravated by the use of generative AI, which may eventually rewrite its original algorithm: any humanitarian safeguards that humans have built into the system’s algorithm could be overridden by the AI the moment it deems it fit to reprogram itself in order to reach predetermined, statistically designated kill quotas. Let us assume for a moment that a generative AI finds it most appropriate to kill high school students to prevent them from being drafted into their national armed forces in a year or two, and changes its own programming to this end. In such cases it may be too late for a human operator to intervene, since LAWS kill, and will continue to kill, rapidly and very efficiently (Alwardt & Schörnig, 2021, p. 299). Moreover, given the opacity of a LAWS’s internal processes, especially while it is amending its own programming, human controllers and/or supervisors may remain oblivious to the risks being generated (Crootof, 2016b; Herzfeld, 2022). This author wishes to stress that any human control will in all likelihood be too little and too late to be meaningful.

In this author’s opinion, the international community attaches too much significance to MHC as a humanitarian solution to LAWS-related risks. The first group of these risks is that LAWS may at times prove unable to follow cardinal principles of international humanitarian law, such as the principles of distinction and proportionality (Santoni de Sio & van den Hoven, 2018; Tzoufis & Petropoulos, 2024). This failure may stem from the limits of current technological capabilities, such as sensors. In other cases, however, LAWS may run on defective systems or have broken algorithms due to self-inserted changes in their programming. Furthermore, LAWS are usually tested in structured environments where, per the usual modus operandi, one factor is changed in each experiment. Yet the notorious fog of war will always be a relevant factor that may distort the facts on the ground in the decision-making cycle of a LAWS, paving the way for violations of law. As with any AI-enabled system (LaCroix & Luccioni, 2025, p. 4037), such contingencies are impossible to foresee, and when they occur, the international criminal justice system has essentially no means of attributing culpability to anyone, since mens rea, i.e. a guilty state of mind, is simply absent. Even where a human operator knowingly and willingly deploys a clearly defective system, proving such a culpable state of mind on the part of the personnel concerned will be an uphill battle, given the opacity of LAWS.

In addition to the legal violations caused by LAWS, there is a strong argument that it is wrong to allow autonomous machines to decide whether or not to kill human beings in a given instance of hostilities (Güneysu, 2022; Santoni de Sio & van den Hoven, 2018). This objection is ethical in nature and concerns, first and foremost, the ethics of delegating lethal authority. According to those who hold this view, it is unethical to leave it to an autonomous machine to decide whose life ends, especially since LAWS are simply “incapable of empathy or of navigating in dilemmatic situations” (Rosert & Sauer, 2019). Likewise, reducing human lives to meaningless clusters of computational data expressed as numerical values should be seen as a violation of respect for human life and human dignity. Although highly relevant to military ethics, this very important question of delegating the ultimate decision to LAWS will not be addressed here, since it deserves its own dedicated investigation.

Lastly, the deployment of LAWS amounts to the pallbearer of the “rules-based international order”, in the sense that accountability and responsibility will be jettisoned in exchange for a darker international reality in which, for various reasons, impunity will be the name of the game. The opacity of the systems on which LAWS run is one of these impunity-enhancing factors. Moreover, this opacity could become yet another incentive for members of armed forces all over the world to turn a blind eye to violations of humanitarian rules and values by others. Such violations would still be punishable by law; however, as already implied, the criminal law problems they create are too technical and complicated for the international criminal justice system to cope with (Crootof, 2016b). The most probable outcome of increasing autonomy in critical military functions is therefore an impunity gap.

Programming the principle of distinction for the theatre of war would be challenging, since it demands a high degree of context sensitivity (Herzfeld, 2022, p. 78). Yet the legally sound deployment of LAWS as a method of warfare requires precisely such programming. LAWS are among the most striking examples of “remote attack technologies” (Boothby, 2018, p. 24). Unmanned combat aerial vehicles (UCAVs) are another class of weapons or means of warfare that fall under the category of remote attack technologies. UCAVs belong to the narrower sub-group of “remotely piloted aircraft” (RPAs). RPAs are “aircraft piloted by an individual who is not on board” (Dinstein & Dahl, 2020). “The controller of an RPA may occupy a control station distant from the RPA’s area of operation. From that control station the controller employs computerized links with the RPA to guide it and monitors the output of its sensors” (Dinstein & Dahl, 2020, p. 32). UCAVs do not necessarily qualify as LAWS. As mentioned, they are under the complete control of an operator (Frølich & Mansouri, 2021, p. 51), who uses them as an extension of themselves to launch lethal attacks on targets from the comfort of a highly secure military base.

US armed forces used UCAVs in so-called signature strikes, especially in Pakistan and Afghanistan (Herzfeld, 2022). Such strikes have targeted “groups of men who bear certain signatures, or defining characteristics associated with terrorist activity, but whose individual [identities] aren’t necessarily known” (Bellaby, 2021; Heller, 2013). This type of strike differs fundamentally from a personality strike because the identity of the individuals about to be attacked is irrelevant; what matters is that they display a certain predetermined behaviour that qualifies them as military targets (Boyle, 2015, p. 114; Herzfeld, 2022). In this qualification process, pattern recognition algorithms play a decisive role (Bellaby, 2021).

Understanding signature strikes is crucial to grasping why MHC will be a poor solution to all of these humanitarian concerns. It must be stressed again, however, that signature strikes are usually carried out by remotely piloted UCAVs, not by fully autonomous systems. The critical decisions on targeting and engagement are made by humans. Yet there are some significant similarities.

Firstly, although the ultimate say belongs to human operators, their decisions depend on sensors integrated into the drones. Human-machine interaction plays a role in this UCAV-related setting, just as it does with LAWS. Secondly, the operator’s distance from the battlefield is immense, much like the distance created by the deployment of LAWS (Hovd, 2023, p. 9), although the former is smaller than the latter: UCAV operators must maintain a nexus with the unfolding military situation to decide when and whom to attack, whereas this nexus will be absent in future autonomous warfare. Despite this relative proximity, signature strikes have received disastrous humanitarian assessments (Vlad & Hardy, 2024).

Finally, and most importantly, signature strikes are carried out on the basis of an analysis of commonly observed traits that link a particular person to terrorist activities, because the same traits were observed in earlier cases of terrorism. The logic is that if a person performs a certain task in the way a terrorist did before (pattern of life), this person must be a terrorist as well. This logic amounts to nothing but an algorithmic evaluation of, or data mining in, a previously collected and compiled database. Vlad and Hardy (2024, p. 1213) remind us that “throughout the 2000s, intelligence support to targeting operations grew to include complex data networks, including thousands of cross-referenced data points. Data networks comprise human and signals intelligence, logistical and financial data, and sophisticated pattern of life (POL) analysis”. In this sense, the similarity between signature strikes and the way LAWS operate is noteworthy.

Some aspects of signature strikes make this mode of warfare more amenable to humanitarian safeguards than fully autonomous warfare. The most important factor is the presence of a pilot who decides on the final lethal attack. Together with other personnel from the Air Force, other military branches and civilian intelligence agencies, there is almost always more than one pair of eyes evaluating the incoming feed from the drone. Some of these actors are in the same operations room, as foreseen by operational procedures. Others, however, monitor the shared stream of ongoing hostilities or operations from other locations. In theory, this enables a more sober evaluation of the whole situation, since physical distance between personnel should insulate them from the influence of a dominant but faulty interpretation of developments in the operations room. This insulation is supposed to help prevent mistakes by the operators. As already mentioned, however, this has not always worked: US personnel have attacked weddings and other peaceful gatherings and killed a large number of individuals who were not taking part in the hostilities (Herzfeld, 2022).

The human contribution to the decision loop is evidently pivotal in signature strikes, whereas in the case of LAWS it converges to zero. Given the criticism the former has received for the lethal mistakes committed, there is no reason to be optimistic about how the latter will fare with respect to humanitarian law. This author is of the opinion that the failures of signature strikes should be seen as an important wake-up call regarding the unreliability of LAWS in warfare.

Another noteworthy consequence of the increasing deployment of LAWS is so-called moral deskilling. LAWS tend to weaken human moral agency (Schwarz, 2021). As digital infrastructures and interfaces gain importance in the military landscape, there is a tendency to imagine and frame ethical practices in technological terms (Kim, 2025; Schwarz, 2021). Humans almost inevitably place excessive trust in AI-enabled systems in particular and in technology in general. When personnel are slotted to work in tandem with state-of-the-art systems, they are forced to apply the logic of speed and optimization to moral reasoning, which deskills them as moral actors (Schwarz, 2021).

Kim (2025) stresses that the indiscriminate allocation of judgement-requiring tasks to AI-enabled systems has three consequences. Firstly, it obscures the locus of responsibility at the individual and societal levels, which in turn leads to widespread evasion of responsibility. Secondly, core human cognitive and ethical judgement abilities erode over time, i.e. moral deskilling occurs. Finally, as moral and cognitive capabilities, and even vocational standards and best practices, erode, this initially personal loss will spill over to the societal level, and society as a whole may eventually descend into an Arendtian state of “thoughtlessness”, also known as “Eichmannization” (Kim, 2025).

The term “deskilling” was coined by Braverman to analyse the problems created by the automation of factory production lines (Braverman, 1974). It has been used to describe how twentieth-century breakthroughs in machine automation economically devalued the practical knowledge and skill sets traditionally developed by machinists, artisans, and other highly skilled workers (Vallor, 2014b). According to Braverman, the workplace became increasingly dominated by Taylorist methods of work organization, which represent a continuation of managerial control and the progressive separation of job conceptualization from execution (Guha & Galliott, 2023). Braverman argued that this dehumanization of production would end up devaluing many artisanal skills and much human expertise, impoverishing workers even further. Grounded in a Marxist interpretation of how capitalism divides specialized labour to reduce costs, his analysis sought to show how efforts to boost output and managerial authority may result in the pauperization of the masses.

Deskilling was originally geared towards explaining how workers lost their jobs due to automation; however, it can also be applied to humans whose professional activities are augmented by AI-enabled systems, including but not limited to the military realm (Huseynova, 2024, p. 3). Vallor has adeptly applied a deskilling approach to military human-machine interaction (Vallor, 2013, 2014a, 2014b). Her approach has met with some well-grounded, if rare, criticism (Biggar, 2023; Guha & Galliott, 2023), but it still offers great insight into the moral aspects of the deployment of LAWS.

The force of Vallor’s deskilling analysis stems from its grounding in the well-established ethical tradition of virtue ethics. Her engagement with deskilling and LAWS has other strengths as well. Vallor offers a convincing framework for raising awareness of the risks brought about by LAWS, stressing in the process humanity’s endangered ethical know-how, which may be irretrievably lost. Her theory is both descriptive and prescriptive, and it therefore offers valuable insights for the future regulation of LAWS. Moreover, what she warns about has to a considerable extent been borne out by scientific studies, albeit mostly in various civilian sectors (Emery, 2021; Johnson, 2023). For instance, Rinard applied the concept of deskilling admirably to the nursing profession (Rinard, 1996), convincingly highlighting how massive technological changes brought about fragmentation and deskilling in that profession (Guha & Galliott, 2023).

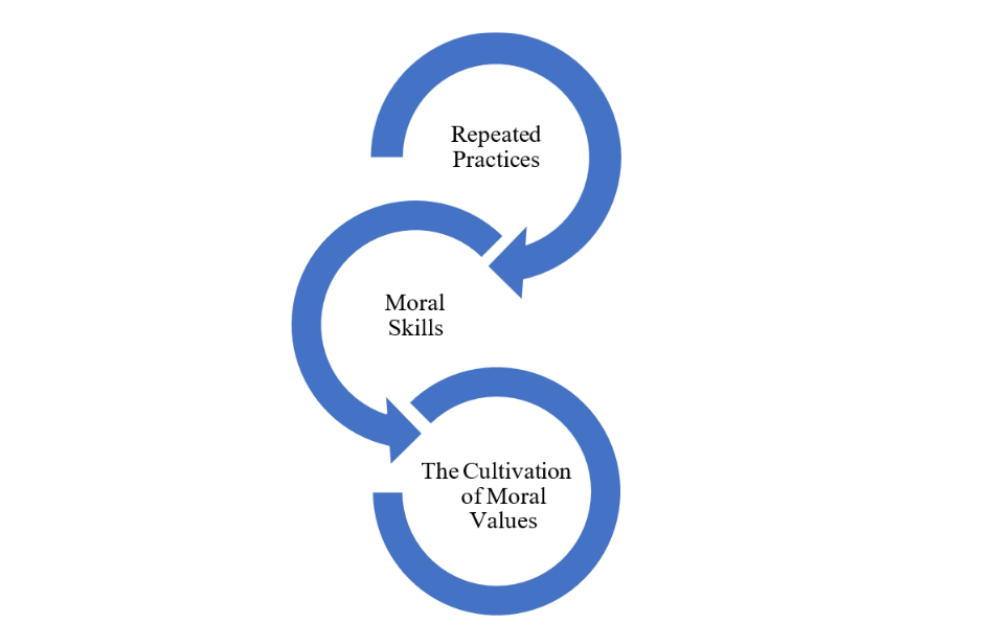

Biggar argues that the augmentation of military tasks by AI will not automatically cause deskilling but rather reskilling: soldiers will, in his words, be “differently skilled”. Guha and Galliott open their well-researched paper with a thorough treatment of Vallor’s theory, in which they describe her expectation of growing moral deskilling in the military as “speculatively observed”. It is difficult to fully agree with these arguments. A downgrade in vocational and/or moral skills is not really different from “being differently skilled”, since deskilling encompasses precisely such cases in which lower-level skills are now acquired on the job. Guha and Galliott might have been justified in labelling Vallor’s observation as speculation with respect to military affairs, were this trait not well documented in other sectors. Its recurrence in the military domain is only to be expected. Vallor voiced her concerns that deskilling would occur in military affairs unless precautionary steps were taken in this world of increasingly automated militaries (Vallor, 2013). Without such precautions, Vallor warns emphatically, a dangerous moral deskilling of the whole military profession will come knocking on our doors (Vallor, 2013). Vallor remains Aristotelian in her understanding of virtue ethics. Habituation plays a large part, if not the only part, in the acquisition of virtues, as illustrated in Figure 1. That is, virtues are developed through repeated practice, which creates moral skills that in turn cultivate those virtues (Vallor, 2013, 2014b).

Any military situation may contain a feature that is morally salient (Rebera & Wood, 2025). Such a feature should prompt moral scrutiny by military personnel and lead them to analyse the situation ethically. This introspection, among other factors, may help hone military virtues; direct engagement by personnel with the morally salient features of the unfolding battle scene is therefore a prerequisite if military virtues are to be preserved.

Figure 1. Habitual Acquisition of Virtues

Killing as a rationally organized bureaucratic matter requires “considerable moral skill” (Vallor, 2014b). This proposition is particularly valid for today’s militaries. The addition of unprecedented computational speed and algorithmic precision will make this moral skill even more desirable (Vallor, 2014b). In stark contrast to this need, the rampant deployment of killer robots will induce a slacking-off (Güneysu, 2024) and erode the pursuit of ethicality instilled on the job. As autonomy grows, so will overconfidence in the supposedly algorithmically guaranteed success of its solutions. This overconfidence is another factor that will dilute military ethics, since human operators will find it redundant to morally scrutinize AI-generated solutions.

The findings of Crowston and Bolici (2025) appear to accord closely with those of Vallor. They, too, warn their readers about deskilling. The problem becomes even graver with the introduction of generative AI into decision-making processes involving certain judgement calls. Deskilling means that the work now reserved for humans requires a much lower level of skill. Humans lose the skills that they normally acquire through experimentation (Crowston & Bolici, 2025). Thus, already acquired skills are substantially eroded, and new recruits can only be trained to a new and lower level of moral readiness.

An erasure of military virtues has been on display in Gaza, where Israel Defense Forces (IDF) personnel have lacked a disciplined and principled approach to their own function in the area during the hostilities, in the process ridiculing the deceased as well as aggrieved civilians. Such a modus operandi is certainly incompatible with the doctrine of any decent armed forces, including the IDF.

It has been obvious to outsiders that there is an immense asymmetry between the warring parties in this latest conflict. The knee-jerk reaction would be to blame this unprofessional behaviour on that unbridgeable asymmetry and on the lack of real, perilous combat experience among the vast majority of IDF operators, owing to the distance created by the IDF's technological superiority. This technological supremacy, and the distance it creates in favour of IDF elements, are certainly among the reasons for the reported cases of unethical and unsoldierly conduct. However, automation has also played a major role in Israel's conduct of the war. This conflict could therefore be a painful but sobering learning ground for what the real ramifications of LAWS will be.

Grassiani and Gazit (2025) claim that Israel is undergoing a process of de-democratization as its governments progressively undermine democratic principles and institutions. This de-democratization goes hand in hand with the “normalization of violence” (Grassiani & Gazit, 2025). This tendency is evident not only in domestic affairs but also in another setting, namely the Palestinian territories under Israeli occupation. Negative attitudes toward an excluded group are strongly influenced by perceived threat, especially when groups compete for limited resources and when the other group is labelled as a people of inferior qualities (Maoz & McCauley, 2008, p. 95). Israeli military personnel have incessantly displayed a blatant disregard for humanitarian values, and very little has been done to respect and uphold the international law of armed conflict.

As Nijim (2024, p. 18) cogently puts it, “(t)he bureaucratic machine can coerce soldiers to take actions that may go against their will”. However, a very long list of instances documented in video footage shows IDF members committing transgressions willingly and knowingly, for example, demolishing huge buildings and recording the demolition as a birthday present for a soldier's two-year-old daughter (Nijim, 2024, p. 18). Israel recognizes that some of its troops are too readily bellicose, and the IDF is making every effort to address this issue (Grassiani, 2023). The IDF's record of observing humanitarian law has so far reportedly been sub-par, to put it mildly. Yet another element jeopardizes humanitarian values even further: the deployment of AI-enabled systems, especially in the targeting phase of the military decision-making cycle (Lahmann, 2025).

The IDF top brass wanted to react to the shocking Hamas operation by eliminating power targets (matarot otzem) with a view to creating havoc within Palestinian civil society (Abraham, 2023). This is already a violation of the law of armed conflict, which prohibits acts whose primary purpose is to spread terror among the civilian population. Extensive use of a system called the Gospel carried this faulty plan to vicious extremes, given its AI-powered capability to generate targets at rates never seen before (Abraham, 2023).

This novel tool, which can rapidly evaluate enormous volumes of data to produce thousands of possible “targets” for military attacks during a conflict, is a dream come true for any military decision-maker, whether at the strategic or the tactical level. As Yuval Abraham reminds us, such a machine (or rather a program) is already in use by the IDF (Abraham, 2024). In the following excerpt on another, similar target-generating system called Lavender, Abraham vividly reports the automatic acceptance of AI-generated decisions by IDF personnel, which also supports the gist of this paper:

“During the early stages of the war, the army gave sweeping approval for officers to adopt Lavender’s kill lists, with no requirement to thoroughly check why the machine made those choices or to examine the raw intelligence data on which they were based. One source stated that human personnel often served only as a “rubber stamp” for the machine’s decisions, adding that, normally, they would personally devote only about “20 seconds” to each target before authorising a bombing — just to make sure the Lavender-marked target is male.”

The fact that the army gave sweeping approval for AI-generated solutions hints at weakened control and restraint on the IDF side. That soldiers turned into automatons in an approval craze is a very serious warning. Chris Gray (2025, p. 53) points to this blinding craze as follows:

“In the past, Israel went to some lengths to minimize the number of civilian casualties; since October 7, 2023, thanks to the targeting permission of the Gospel and other software, it will destroy an apartment block killing dozens of civilians to kill one low-level fighter, who probably isn’t at home anyway, but his family is.”

At this point, something must be said about a culture that has been a dominant feature of the IDF. Not all of the reported breaches of humanitarian law can be attributed exclusively to personal mistakes or transgressions. Alarmingly, “rules of engagement and systematic oppression” seem to lie at the heart of the problem (Spitka, 2023, p. 61). Following attacks on IDF personnel in previous years, the IDF has grown very suspicious of crowds gathered for protests and has established a very tough set of rules for such situations, in which its personnel habitually come into contact with civilians (Spitka, 2023). This, too, must have played a role in the IDF's faster adoption of autonomously generated decisions. Against this background, and despite all the rosy portrayals of LAWS prevalent in academia and elsewhere, the level of AI-induced harm inflicted upon civilians in Gaza is simply unparalleled (Rosen, 2023). Evidence shows that the IDF's AI programs are not militarily effective; rather, they have been instrumentalized as cover for wanton destruction (Gray, 2025).

Clearly, what has happened in Gaza is a textbook example of moral exclusion. In a case of moral exclusion, dehumanized people are placed beyond the boundaries of moral values, accountability and practice (Cohen, 2015). Further research is needed to establish how much of this dehumanization is attributable to the deployment of LAWS and other AI-enabled systems. Yet it is obvious that these systems have had no pro-humanitarian impact at all. In light of the reported cases of ridicule and total lack of restraint on the part of the IDF rank and file, the claim to have observed embryonic instances of moral deskilling in the IDF's ranks seems justifiable.

The implementation of international humanitarian law is already a very delicate matter, in which many interests, natural tendencies, bureaucratic practices, and the so-called fog of war tend to militate against the proper upholding of its rules, in letter and in spirit. Unfortunately, the increasing use of AI-enabled systems makes the matter even more fragile. Humanitarian studies on the deployment of LAWS usually probe immediate violations of humanitarian law. However, another process is at work: moral deskilling. Moral deskilling can be defined as the process by which the moral abilities and acquired ethics-related professional habits of soldiers and other military personnel are rendered obsolete. Moral deskilling could set in stone the much-lamented lack of humanitarian care and restraint and help perpetuate a state of anarchy and an ensuing culture of impunity. It would thereby facilitate the emergence of a new type of military culture on which humanitarian concerns have no influence at all. This should be seen as a cultural appropriation of violence with a no-holds-barred attitude, which may give rise to a new type of systemic violence that puts at risk every humanitarian gain achieved so far. The principles of distinction and proportionality will be the first casualties if such a change occurs. In addition, international criminal justice will be severely hampered by the many problems attached to, or already inherent in, the way LAWS operate.

Deskilling can be explained using Kelman's account of “violence without moral restraint” (Kelman, 1973). Authorization, routinization and dehumanization each play an important role in the commission of sanctioned massacres without any qualms on the part of the cadres in the field, since decision-making, along with any question of responsibility for that decision-making, seems to them to lie utterly outside their professional ambit. As for authorization, it is a foregone conclusion that LAWS will be regarded as superior soldiers. From this authority emerges an inattentive mentality reminiscent of Eichmann's. In turn, human operators and personnel will see themselves as happy automatons whose only responsibility is to follow AI-generated decisions. Such routinization will force individuals to concentrate solely on the most immediate task at hand, which will be but a minor part of the whole operation. This tunnel vision will make it extremely difficult to subject the complete process to moral scrutiny. Finally, dehumanization reduces enemy personnel, or (in the case of law enforcement) suspects, to mere statistical figures, blurring any retrospective or in situ humanitarian evaluation of the operation. This further contributes to the erosion of military virtues.

The international community has tried to cope with this existential threat by championing the concept of meaningful human control (MHC). Nevertheless, such control will be either too little or too late, given the opacity of how LAWS operate and the growing need to accelerate almost every military decision once AI-enabled systems, whether LAWS or mere decision-support systems, are introduced onto the battlefield. Even in signature strikes, where humans retain control over critical military decisions, wrongdoing has been reported. There are conspicuous similarities between signature strikes and LAWS, and these similarities leave very little room for optimism about the future humanitarian record of AI-enabled military operations.

Declaration of Interest Statement: The author declares no actual or potential conflict of interest.

References

Abraham, Y. (2023, November 30). ‘A mass assassination factory’: Inside Israel’s calculated bombing of Gaza. +972 Magazine. https://www.972mag.com/mass-assassination-factory-israel-calculated-bombing-gaza/

Abraham, Y. (2024, April 3). ‘Lavender’: The AI machine directing Israel’s bombing spree in Gaza. Balfour Project. https://balfourproject.org/lavender-the-ai-machine-directing-israels-bombing-spree-in-gaza/

Alwardt, C., & Schörnig, N. (2021). A necessary step back? Zeitschrift für Friedens- und Konfliktforschung, 10(2), 295–317. https://doi.org/10.1007/s42597-021-00067-z

Arai, K., & Matsumoto, M. (2023). Public perceptions of autonomous lethal weapons systems. AI and Ethics. https://doi.org/10.1007/s43681-023-00282-9

Bellaby, R. W. (2021). Can AI weapons make ethical decisions? Criminal Justice Ethics, 40(2), 86–107. https://doi.org/10.1080/0731129X.2021.1951459

Biggar, N. (2023). An ethic of military uses of artificial intelligence: Sustaining virtue, granting autonomy, and calibrating risk. Conatus, 8, 67–76. https://doi.org/10.12681/cjp.34666

Bode, I. (2024). Emergent normativity: Communities of practice, technology, and lethal autonomous weapon systems. Global Studies Quarterly, 4(1), 1–11. https://doi.org/10.1093/isagsq/ksad073

Boothby, W. (2018). Dehumanization: Is there a legal problem under Article 36? In W. Heintschel von Heinegg, R. Frau, & T. Singer (Eds.), Dehumanization of Warfare: Legal Implications of New Weapon Technologies (pp. 21–52). Springer International Publishing. https://doi.org/10.1007/978-3-319-67266-3_3

Boshuijzen-van Burken, C., Spruit, S., Geijsen, T., & Fillerup, L. (2024). A values-based approach to designing military autonomous systems. Ethics and Information Technology, 26(3), 56. https://doi.org/10.1007/s10676-024-09789-z

Boyle, M. J. (2015). The legal and ethical implications of drone warfare. The International Journal of Human Rights, 19(2), 105–126. https://doi.org/10.1080/13642987.2014.991210

Braverman, H. (1974). Labor and monopoly capital: The degradation of work in the twentieth century. Monthly Review Press.

Chapa, J. (2024). Military AI ethics. Journal of Military Ethics, 23(3–4), 306–321. https://doi.org/10.1080/15027570.2024.2439654

Coco, A. (2023). Exploring the impact of automation bias and complacency on individual criminal responsibility for war crimes. Journal of International Criminal Justice. https://doi.org/10.1093/jicj/mqad034

Coeckelbergh, M. (2013). Drones, information technology, and distance: Mapping the moral epistemology of remote fighting. Ethics and Information Technology, 15(2), 87–98. https://doi.org/10.1007/s10676-013-9313-6

Cohen, S. J. (2015). Breakable and unbreakable silences: Implicit dehumanization and anti-Arab prejudice in Israeli soldiers’ narratives concerning Palestinian women. International Journal of Applied Psychoanalytic Studies, 12(3), 245–277. https://doi.org/10.1002/aps.1461

Crootof, R. (2016a). A meaningful floor for “meaningful human control”. Temple International and Comparative Law Journal, 30(1), 53–62. https://ssrn.com/abstract=2705560

Crootof, R. (2016b). War torts: Accountability for autonomous weapons. University of Pennsylvania Law Review, 164, 1347–1402. https://heinonline.org/HOL/P?h=hein.journals/pnlr164&i=1375

Crowston, K., & Bolici, F. (2025). Deskilling and upskilling with AI systems. Information Research: An International Electronic Journal, 30(iConf), 1009–1023. https://doi.org/10.47989/ir30iConf47143

de Vries, B. (2023). Individual criminal responsibility for autonomous weapons systems in International Criminal Law. Brill | Nijhoff.

Dinstein, Y., & Dahl, A. W. (2020). Section III: Remote and autonomous weapons. In Y. Dinstein & A. W. Dahl (Eds.), Oslo Manual on Select Topics of the Law of Armed Conflict: Rules and Commentary (pp. 31–42). Springer International Publishing. https://doi.org/10.1007/978-3-030-39169-0_3

Draft Articles on Autonomous Weapon Systems – Prohibitions and Other Regulatory Measures on the Basis of International Humanitarian Law (“IHL”) Submitted by Australia, Canada, Estonia, Japan, Latvia, Lithuania, Poland, the Republic of Korea, the United Kingdom, and the United States, CCW/GGE.1/2024/WP.10 (2024).

Emery, J. R. (2021). Algorithms, AI, and ethics of war. Peace Review, 33(2), 205–212. https://doi.org/10.1080/10402659.2021.1998749

Frølich, M., & Mansouri, M. (2021, April 18). Ethical dynamics of autonomous weapon systems. ICONS 2021: The Sixteenth International Conference on Systems, Porto, Portugal.

Goddard, K., Roudsari, A., & Wyatt, J. C. (2012). Automation bias: A systematic review of frequency, effect mediators, and mitigators. Journal of the American Medical Informatics Association, 19(1), 121–127. https://doi.org/10.1136/amiajnl-2011-000089

Grassiani, E. (2023). Instrumental morality under a gaze: Israeli soldiers’ reasoning on doing ‘Good’. In E.-H. Kramer & T. Molendijk (Eds.), Violence in Extreme Conditions: Ethical Challenges in Military Practice (pp. 59–72). Springer International Publishing. https://doi.org/10.1007/978-3-031-16119-3_5

Grassiani, E., & Gazit, N. (2025). Civilian ‘soft’ militarism through informal education in Israel: Learning to protect and connect to the land. Critical Military Studies, 11(1), 39–58. https://doi.org/10.1080/23337486.2024.2349365

Gray, C. H. (2025). AI, sacred violence, and war—The case of Gaza. Palgrave Macmillan Cham.

Guha, M., & Galliott, J. (2023). Autonomous systems and moral de-skilling: Beyond good and evil in the emergent battlespaces of the twenty-first century. Journal of Military Ethics, 22(1), 51–71. https://doi.org/10.1080/15027570.2023.2232623

Güneysu, G. (2022). Otonom silah sistemleri: Bir uluslararası hukuk incelemesi [Autonomous weapon systems: An international law analysis]. Nisan Kitapevi.

Güneysu, G. (2024). Lethal autonomous weapon systems and automation bias. Yuridika, 39(3), 395–424. http://dx.doi.org/10.2139/ssrn.5343244

Haner, J., & Garcia, D. (2019). The Artificial intelligence arms race: Trends and world leaders in autonomous weapons development. Global Policy, 10(3), 331–337. https://doi.org/10.1111/1758-5899.12713

Heller, K. J. (2013). ‘One hell of a killing machine’: Signature strikes and international law. Journal of International Criminal Justice, 11(1), 89–119. https://doi.org/10.1093/jicj/mqs093

Herzfeld, N. (2022). Can lethal autonomous weapons be just? Journal of Moral Theology, 11(Special Issue 1), 70–86. https://doi.org/10.55476/001c.34124

Heuser, S., Steil, J., & Salloch, S. (2025). AI ethics beyond principles: Strengthening the life-world perspective. Science and Engineering Ethics, 31(1), 7. https://doi.org/10.1007/s11948-025-00530-7

Hovd, S. N. (2023). Tools of war and virtue–Institutional structures as a source of ethical deskilling. Frontiers in Big Data, 5. https://doi.org/10.3389/fdata.2022.1019293

Huseynova, F. (2024). Addressing deskilling as a result of human-AI augmentation in the workplace. 7th Conference on Technology Ethics (TETHICS 2024), Tampere, Finland.

Johnson, J. (2024). Finding AI faces in the moon and armies in the clouds: Anthropomorphising artificial intelligence in military human-machine interactions. Global Society, 38(1), 67–82. https://doi.org/10.1080/13600826.2023.2205444

Kelman, H. C. (1973). Violence without moral restraint: Reflections on the dehumanization of victims and victimizers. Journal of Social Issues, 29(4), 25–61. https://doi.org/10.1111/j.1540-4560.1973.tb00102.x

Kim, J. (2025). The end of resonance and the future of human judgement in the age of AI: Ethical deskilling, abdication of responsibility, and the quest for human-centric AI governance. https://philarchive.org/rec/KIMTEO-20

LaCroix, T., & Luccioni, A. S. (2025). Metaethical perspectives on ‘benchmarking’ AI ethics. AI and Ethics, 5(4), 4029–4047. https://doi.org/10.1007/s43681-025-00703-x

Lahmann, H. (2025). Self-determination in the age of algorithmic warfare. European Journal of Legal Studies, 16, 161–214. http://dx.doi.org/10.2139/ssrn.4587724

Maoz, I., & McCauley, C. (2008). Threat, dehumanization, and support for retaliatory aggressive policies in asymmetric conflict. The Journal of Conflict Resolution, 52(1), 93–116. https://www.jstor.org/stable/27638596

Nijim, M. (2024). Gazacide: Palestinians from refugeehood to ontological obliteration. Critical Sociology, 51(7–8), 1581–1603. https://doi.org/10.1177/08969205241286530

Park, S. (2020). Analysis of the positions held by countries on legal issues of lethal autonomous weapons systems and proper domestic policy direction of South Korea. The Korean Journal of Defense Analysis, 32(3), 393–418. https://doi.org/10.22883/KJDA.2020.32.3.004

Poszler, F., & Lange, B. (2024). The impact of intelligent decision-support systems on humans’ ethical decision-making: A systematic literature review and an integrated framework. Technological Forecasting and Social Change, 204, 1–19. https://doi.org/10.1016/j.techfore.2024.123403

Rebera, A. P., & Wood, N. G. (2025). Virtues and rules in war: Military ethics and technologies of radical risk-reduction. Ethical Theory and Moral Practice, 28, 821–837. https://doi.org/10.1007/s10677-025-10515-x

Renic, N., & Schwarz, E. (2023). Crimes of dispassion: Autonomous weapons and the moral challenge of systematic killing. Ethics & International Affairs, 37(3), 321–343. https://doi.org/10.1017/S0892679423000291

Rinard, R. G. (1996). Technology, deskilling, and nurses: The impact of the technologically changing environment. Advances in Nursing Science, 18, 60–69. https://doi.org/10.1097/00012272-199606000-00008

Rosen, B. (2023, December 15). Unhuman killings: AI and civilian harm in Gaza. Just Security. https://www.justsecurity.org/90676/unhuman-killings-ai-and-civilian-harm-in-gaza/

Rosert, E., & Sauer, F. (2019). Prohibiting autonomous weapons: Put human dignity first. Global Policy, 10(3), 370–375. https://doi.org/10.1111/1758-5899.12691

Santoni de Sio, F., & van den Hoven, J. (2018). Meaningful human control over autonomous systems: A philosophical account. Frontiers in Robotics and AI, 5. https://www.frontiersin.org/articles/10.3389/frobt.2018.00015

Sati, M. C. (2023). The attributability of combatant status to military AI technologies under international humanitarian law. Global Society, 38(1), 122–138. https://doi.org/10.1080/13600826.2023.2251509

Schwarz, E. (2021). Silicon Valley goes to war. Philosophy Today, 65(3), 549–569. https://doi.org/10.5840/philtoday2021519407

Spitka, T. (2023). National and international civilian protection strategies in the Israeli-Palestinian conflict (1st ed.). Palgrave Macmillan Cham.

Tzoufis, V., & Petropoulos, N. (2024). The commission of crimes using autonomous weapon systems: Issues of causation and attribution. TATuP - Zeitschrift für Technikfolgenabschätzung in Theorie und Praxis, 33(2), 35–41. https://doi.org/10.14512/tatup.33.2.35

Vallor, S. (2013). The future of military virtue: Autonomous systems and the moral deskilling of the military. 2013 5th International Conference on Cyber Conflict (CYCON 2013), 1–15. https://ieeexplore.ieee.org/document/6568393

Vallor, S. (2014a). Armed robots and military virtue. In L. Floridi & M. Taddeo (Eds.), The ethics of information warfare (pp. 169–185). Springer International Publishing. https://doi.org/10.1007/978-3-319-04135-3_11

Vallor, S. (2014b). Moral deskilling and upskilling in a new machine age: Reflections on the ambiguous future of character. Philosophy & Technology, 28, 107–124. https://doi.org/10.1007/s13347-014-0156-9

Vlad, R. O., & Hardy, J. (2024). Signature strikes and the ethics of targeted killing. International Journal of Intelligence and CounterIntelligence, 1–29. https://doi.org/10.1080/08850607.2024.2382029

Wood, N. G. (2024). Regulating autonomous and AI-enabled weapon systems: The dangers of hype. AI and Ethics, 4(3), 805–817. https://doi.org/10.1007/s43681-024-00448-z